The Complete Evolution of Seedance from 1.0 to 2.0: What Has ByteDance's AI Video Model Been Through?

A Comprehensive Guide to Seedance 1.0, 1.5 Pro, and 2.0: Pros, Cons, and Core Upgrades

If you follow AI video generation, you have almost certainly heard of Seedance, ByteDance's homegrown Chinese model that has gone through three major iterations over the past year. My website has integrated its first three versions: 👉 Z-Video AI Video Generation Tool. Today, let's look back at how this product, dubbed the "King of Chinese AI Video," has evolved step by step.

Origin: The Sprouting of a Seed

The name Seedance is interesting: Seed + Dance suggests a "dancing seed," the process of a seed sprouting and growing.

ByteDance began developing a Seedance prototype as early as 2023, but at the time it was used only for internal testing. The real turning point came in early 2025, when Wu Yonghui took over as the new leader of the ByteDance Seed team and productization accelerated. Six months later, version 1.0 was officially unveiled.

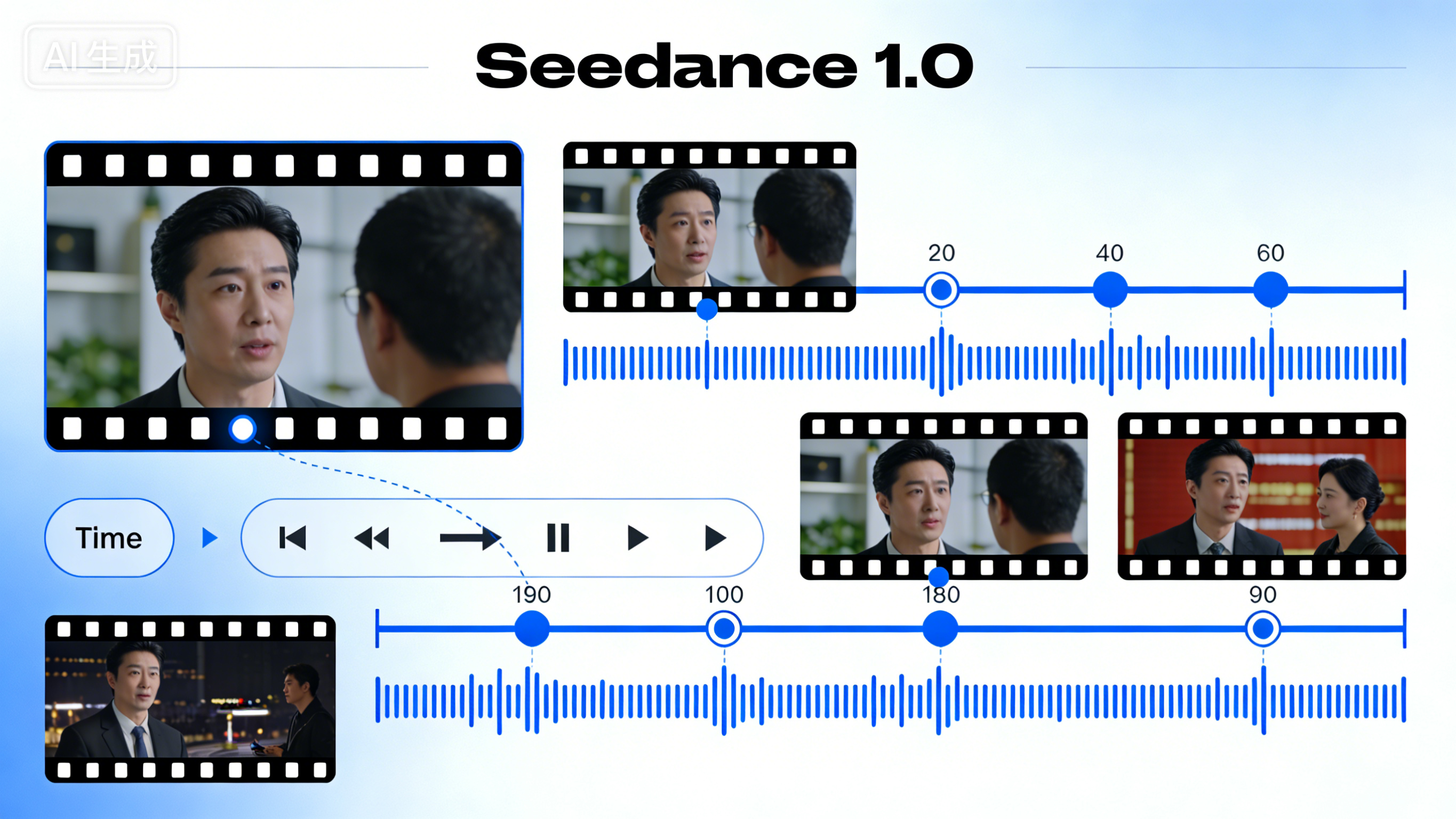

Seedance 1.0: The Breakthrough from 0 to 1 (June 2025)

As the first release, 1.0's core task was to answer a basic question: "Can it generate video at all?"

What did it achieve?

- Accepts text and image inputs and generates 1080p videos of up to 10 seconds with 2 to 3 shot transitions.

- Generating a 5-second 1080p video takes only 41.4 seconds (measured on an NVIDIA L20 GPU).

- Has native multi-shot narrative capability, transitioning naturally between wide, medium, and close-up shots.

Real-world Performance: It understands basic camera language and renders dynamic motion such as running and splashing water well. However, its limitations are obvious: clips are essentially capped at 10 seconds, satisfying results often take several rounds of "card drawing" (rerolling generations), and its handling of complex physical interactions is limited.

One-sentence Summary: It proved this path is viable, but it's not yet stable.

Seedance 1.5 Pro: The Breakthrough in Audio-Visual Synchronization (December 2025)

Six months later, the 1.5 Pro version brought an "auditory revolution."

Core Breakthrough: Native Audio-Video Joint Generation

- Uses a dual-branch multimodal Diffusion Transformer (MMDiT) architecture to generate video and audio together.

- Achieves millisecond-level audio-video synchronization with precise lip-sync.

- Supports multi-person, multi-language dialogue (including Chinese dialects).

Narrative Capability Upgraded Alongside: Stronger semantic understanding, cinematic camera control (long takes, dolly zooms, etc.), and precise capture of motion details and character emotions.

Limitations: Its positioning is still a "production tool" rather than a "world simulator," and it falls short of Sora in complex physical simulation.

One-sentence Summary: The visuals aren't fully realistic yet, but the audio is finally in sync.

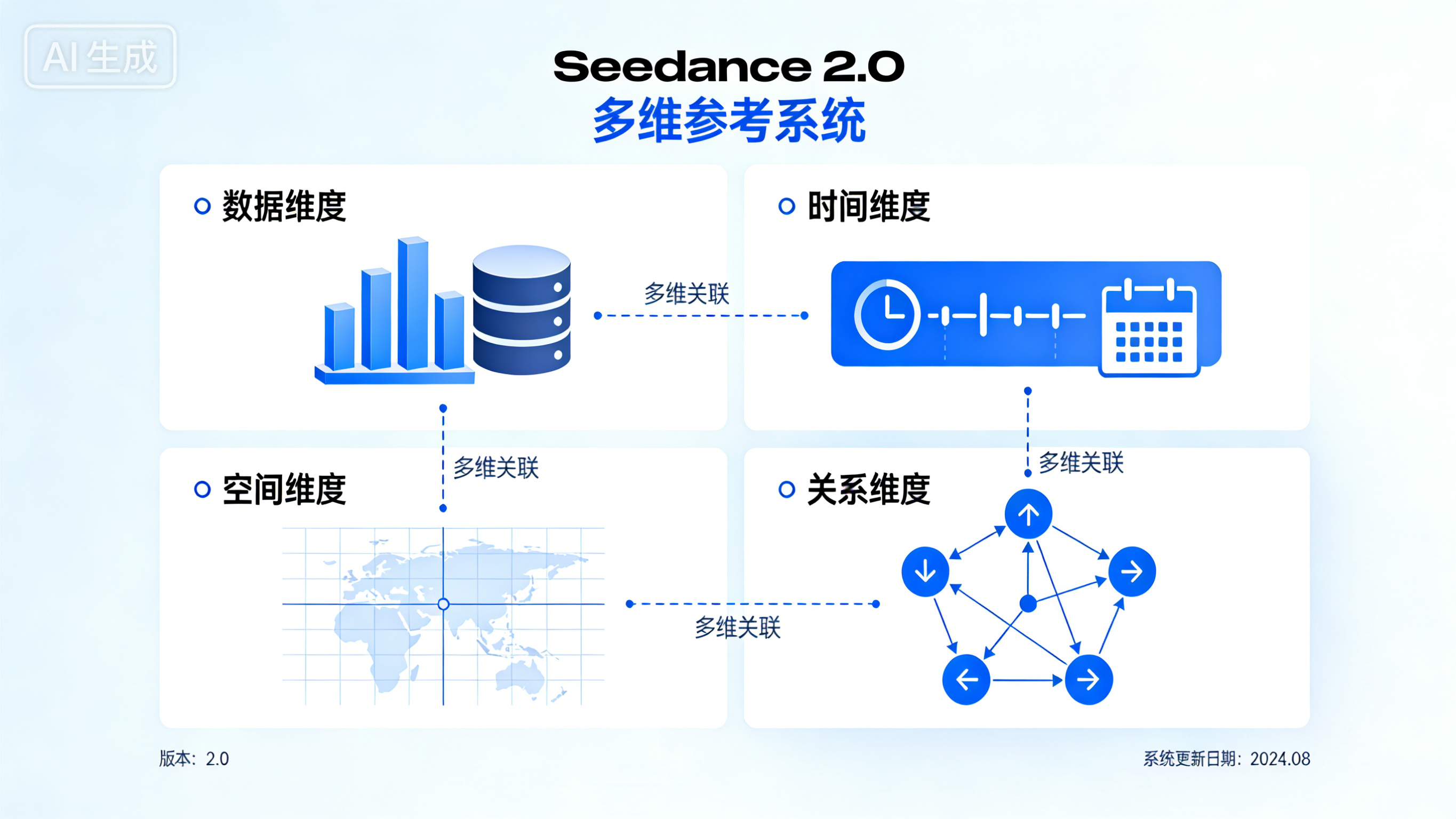

Seedance 2.0: The All-Round, Director-Level King (February 2026)

The latest version, 2.0, brings a "usability revolution."

Landmark Breakthrough: Multi-Dimensional Reference System

- Supports uploading up to 9 images, 3 videos, and 3 audio clips simultaneously as references.

- Introduces an "@" reference system: prompts can specify exactly which character to take from which image, or which action to take from which video.

- Lets you adjust the "influence weight" of each reference for fine-grained control.

Consistency Breakthrough: It tackles AI video's biggest pain point, keeping facial features and clothing details unchanged across multi-shot transitions. This turns generation from "card drawing" into a predictable production tool.

Technical Upgrade: It generates 2K video about 30% faster than comparable models, supports multi-scene sequence generation, and automatically breaks scenes into shots (wide, medium, close-up).

Current Limitations: Complex physical effects (liquid flow, fabric wrinkles) still fall short; long videos suffer from "memory decay" that requires manual editing; and the "real-person material reference" feature has been suspended over ethical risks.

One-sentence Summary: It's starting to listen to human instructions, but it hasn't fully understood the physical world yet.

Quick Overview of Versions

| Version | Release Date | Core Capabilities | One-sentence Summary |
|---|---|---|---|
| Seedance 1.0 | June 2025 | Text-to-Video / Image-to-Video, Multi-shot transitions | It works, but it's unstable |
| Seedance 1.5 Pro | Dec 2025 | Audio-Video Joint Generation | The audio is synced |
| Seedance 2.0 | Feb 2026 | Multi-modal Reference, Director-level Control | It's starting to understand prompts |

My website has integrated the first three versions of Seedance and has followed this Chinese AI video model every step of the way, from its awkward beginnings to maturity.

Although the "real-person material reference" feature in 2.0 has been suspended, its core capabilities (director-level control, strong consistency, and native audio-video generation) have already turned AI video from a "toy" into a "tool."